Sixth 3D - Realtime 3D engine

Table of Contents

- 1. Introduction

- 2. How to take the engine into use

- 3. Understanding 3D engine

- 3.1. Main render loop

- 3.2. Coordinate System (X, Y, Z)

- 3.3. Point3D and Vertex

- 3.4. Edge

- 3.5. Face (Triangle)

- 3.6. Normal Vector

- 3.7. Shading & Lighting

- 3.8. Mesh

- 3.9. Perspective correct textures

- 3.10. Frustum & View Frustum Culling

- 3.11. Constructive Solid Geometry

- 3.12. Winding Order & Backface Culling

- 3.13. Working with Colors

- 4. Developer tools

- 5. Source code

1. Introduction

Sixth 3D is a realtime 3D rendering engine written in pure Java. It runs entirely on the CPU — no GPU required, no OpenGL, no Vulkan, no native libraries. Just Java.

The motivation is simple: GPU-based 3D is a minefield of accidental complexity. Drivers are buggy or missing entirely. Features you need aren't supported on your target hardware. You run out of GPU RAM. You wrestle with platform-specific interop layers, shader compilation quirks, and dependency hell. Every GPU API comes with its own ecosystem of pain — version mismatches, incomplete implementations, vendor-specific workarounds. I want a library that "just works".

Sixth 3D takes a different path. By rendering everything in software on the CPU, the entire GPU problem space simply disappears. You add a Maven dependency, write some Java, and you have a 3D scene. It runs wherever Java runs.

This approach is quite practical for many use-cases. Modern systems ship with many CPU cores, and those with unified memory architectures offer high bandwidth between CPU and RAM. Software rendering that once seemed wasteful is now a reasonable choice where you need good-enough performance without the overhead of a full GPU pipeline. Java's JIT compiler helps too, optimizing hot rendering paths at runtime.

Beyond convenience, CPU rendering gives you complete control. You own every pixel. You can freely experiment with custom rendering algorithms, optimization strategies, and visual effects without being constrained by what a GPU API exposes. Instead of brute-forcing everything through a fixed GPU pipeline, you can implement clever, application-specific optimizations.

Sixth 3D is part of the larger Sixth project, with the long-term goal of providing a platform for 3D user interfaces and interactive data visualization. It can also be used as a standalone 3D engine in any Java project. See the demos for examples of what it can do today.

2. How to take the engine into use

Add the Sixth 3D dependency to your Maven project:

    <dependencies>
        <dependency>
            <groupId>eu.svjatoslav</groupId>
            <artifactId>sixth-3d</artifactId>
            <version>1.4</version>
        </dependency>
    </dependencies>

Also add the repository (the library is not on Maven Central):

    <repositories>
        <repository>
            <id>svjatoslav.eu</id>
            <name>Svjatoslav repository</name>
            <url>https://www3.svjatoslav.eu/maven/</url>
        </repository>
    </repositories>

- Library requires Java 21 or newer.

- Study the demo applications for practical examples. Start with the minimal example to see the basic boilerplate needed to render a 3D scene.

- Study how Sixth 3D engine works.

- Read online JavaDoc.

- See Sixth 3D class diagrams. (Diagrams were generated by using JavaInspect utility)

3. Understanding 3D engine

3.1. Main render loop

The rendering loop is the heart of the engine, continuously generating frames at a target rate (typically 60 FPS). Each frame transforms 3D shapes through a multi-stage pipeline before displaying them on screen.

The render loop runs on a dedicated background daemon thread managed by ViewPanel, which can optionally sleep between frames to maintain a target FPS or run unlimited for benchmarking.
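The frame pacing described above can be sketched as follows. This is a minimal illustration under stated assumptions, not ViewPanel's actual code: `FramePacer`, `sleepNanos`, and `start` are hypothetical names, and a target FPS of 0 is assumed to mean "unlimited".

```java
/** Hypothetical helper; illustrates frame pacing only, not ViewPanel's implementation. */
final class FramePacer {
    private FramePacer() {}

    /**
     * Returns how many nanoseconds to sleep after a frame that took
     * frameNanos to render. A targetFps of 0 or less means "unlimited".
     */
    static long sleepNanos(int targetFps, long frameNanos) {
        if (targetFps <= 0) return 0;              // benchmarking mode: never sleep
        long budget = 1_000_000_000L / targetFps;  // time available per frame
        return Math.max(0, budget - frameNanos);   // no sleep if the frame overran
    }

    /** Starts a daemon thread that calls renderFrame repeatedly until interrupted. */
    static Thread start(int targetFps, Runnable renderFrame) {
        Thread t = new Thread(() -> {
            while (!Thread.currentThread().isInterrupted()) {
                long begin = System.nanoTime();
                renderFrame.run();
                long sleep = sleepNanos(targetFps, System.nanoTime() - begin);
                try {
                    if (sleep > 0) Thread.sleep(sleep / 1_000_000, (int) (sleep % 1_000_000));
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt(); // restore the flag and leave the loop
                }
            }
        }, "render-loop");
        t.setDaemon(true); // rendering alone should not keep the JVM alive
        t.start();
        return t;
    }
}
```

At 60 FPS the per-frame budget is about 16.7 ms, so a frame that renders in 5 ms leaves roughly 11.7 ms of sleep.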

For a detailed walkthrough of each phase with diagrams and code examples, see the dedicated page: Rendering loop.

3.2. Coordinate System (X, Y, Z)

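Sixth 3D uses a coordinate system with X pointing right, Y pointing down, and Z pointing away from the viewer, so X and Y match standard 2D screen coordinates. With these axis directions X × Y = Z, which makes the system right-handed (the same convention many camera libraries use). It should feel intuitive to anyone with a 2D graphics background.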

| Axis | Direction | Meaning |

|---|---|---|

| X | Horizontal, positive = RIGHT | Objects with larger X appear to the right |

| Y | Vertical, positive = DOWN | Lower Y = higher visually (up) |

| Z | Depth, positive = away from viewer | Negative Z = closer to camera |

Practical Examples

- A point at (0, 0, 0) is at the origin.

- A point at (100, 50, 200) is: 100 units right, 50 units down visually, 200 units away from the camera.

- To place object A "above" object B, give A a smaller Y value than B.

Coordinates in this system are stored using the Point3D class, a mutable container with public x, y, z fields supporting vector operations like distance, rotation, and translation.

Vertices (see below) are positioned within this coordinate system.

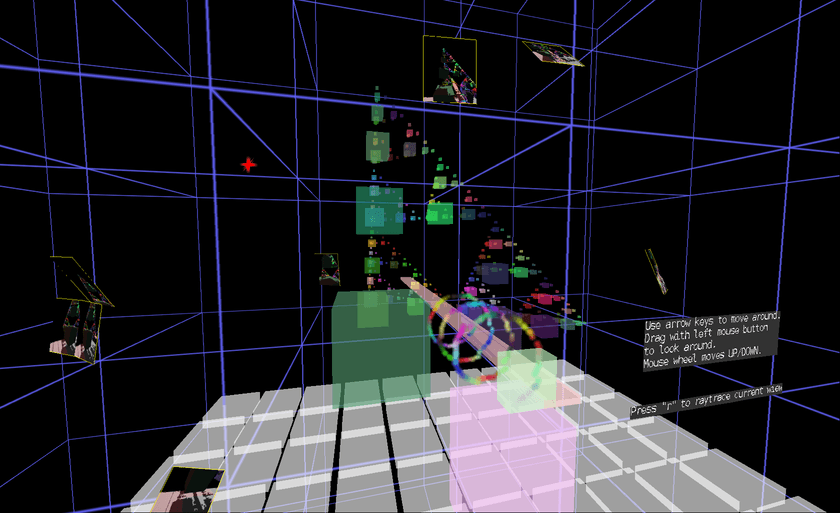

The sixth-3d-demos project includes an interactive coordinate system reference showing X, Y, Z axes as colored arrows with a grid plane for spatial context.

3.3. Point3D and Vertex

Every 3D object is built from vertices — corner points that define the shape's geometry. A triangle has 3 vertices, a cube has 8, and complex meshes have thousands. The engine uses two related classes to represent points in 3D space, each serving a different purpose.

3.3.1. Point3D — Raw Coordinates

Point3D is the fundamental coordinate type throughout the engine. It stores a position or vector with three public fields: x, y, z.

The class provides vector math operations: distance calculation, rotation, translation, scaling, dot/cross products, and interpolation. Methods follow a fluent API convention where mutating operations (like add, multiply) return this for chaining, while non-mutating variants (like withAdded, withMultiplied) return new instances.
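The difference between the two method families can be sketched with a stripped-down stand-in. `Vec3` below is hypothetical and only mirrors the naming convention described above; the real Point3D offers many more operations and may differ in detail.

```java
/** Hypothetical stand-in for illustration; not the engine's Point3D. */
final class Vec3 {
    double x, y, z; // public-style mutable fields, as described above

    Vec3(double x, double y, double z) { this.x = x; this.y = y; this.z = z; }

    /** Mutating: changes this instance and returns it for chaining. */
    Vec3 add(Vec3 o) { x += o.x; y += o.y; z += o.z; return this; }

    /** Mutating: scales this instance in place. */
    Vec3 multiply(double f) { x *= f; y *= f; z *= f; return this; }

    /** Non-mutating: leaves this instance untouched and returns a new one. */
    Vec3 withAdded(Vec3 o) { return new Vec3(x + o.x, y + o.y, z + o.z); }

    /** Non-mutating variant of multiply. */
    Vec3 withMultiplied(double f) { return new Vec3(x * f, y * f, z * f); }
}
```

With this shape, `a.add(b).multiply(2)` modifies `a` itself and allocates nothing, while `a.withAdded(b)` leaves `a` unchanged at the cost of one allocation. The mutating form suits hot rendering paths; the non-mutating form is safer when the original value is still needed.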

Use Point3D for:

- Storing positions, vectors, or any raw 3D coordinate

- Distance and angle calculations between points

- Vector math (dot product, cross product, normalization)

- Rotating or translating positions before shape construction

    Point3D p1 = new Point3D(100, 50, 200);
    Point3D p2 = new Point3D(0, 0, 100);

    double distance = p1.getDistanceTo(p2);       // Euclidean distance
    Point3D direction = p1.subtract(p2).unit();   // Unit vector from p2 to p1
    Point3D rotated = p1.rotate(new Point3D(0,0,0), Math.PI/4, 0); // Rotate 45° in XZ plane

3.3.2. Vertex — Rendering-Ready Coordinates

Vertex wraps a Point3D and adds the coordinate spaces needed during rendering. As a shape transforms through the render pipeline, each vertex tracks its position in multiple spaces:

| Field | Purpose |
|---|---|
| coordinate | Original position in local/model space |
| transformedCoordinate | Position relative to camera (after transform stack) |
| onScreenCoordinate | 2D screen pixels (after perspective projection) |
| textureCoordinate | Optional UV coords in pixel units (not normalized) |
| normal | Optional normal vector for CSG polygon splitting |

During rendering, calculateLocationRelativeToViewer() transforms the vertex through all spaces: first applying the TransformStack to get transformedCoordinate, then projecting to 2D for onScreenCoordinate. Results are cached per-frame to avoid recomputing for vertices shared across multiple shapes.
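A rough sketch of the transform, project, and cache sequence follows. It is heavily simplified and makes several assumptions that are not taken from the engine: `CachedVertex` is a hypothetical class, the camera only translates (no rotation, no transform stack), projection is a plain divide-by-depth around the screen center, and the cache is keyed by a frame number.

```java
/** Hypothetical sketch of per-frame caching; not the engine's Vertex class. */
final class CachedVertex {
    final double x, y, z;        // model-space position
    double camX, camY, camZ;     // position relative to the camera
    double screenX, screenY;     // projected 2D pixel position
    private int lastFrame = -1;  // frame for which the cached values are valid
    int computations = 0;        // counts real recomputations (for demonstration)

    CachedVertex(double x, double y, double z) { this.x = x; this.y = y; this.z = z; }

    /**
     * Computes camera-relative and on-screen coordinates once per frame.
     * Callers are assumed to skip points with camZ <= 0 (behind the camera).
     */
    void calculate(int frame, double cx, double cy, double cz,
                   double zoom, double centerX, double centerY) {
        if (frame == lastFrame) return;          // already done this frame: reuse cache
        lastFrame = frame;
        computations++;
        camX = x - cx; camY = y - cy; camZ = z - cz;
        screenX = centerX + camX * zoom / camZ;  // farther away => closer to the center
        screenY = centerY + camY * zoom / camZ;  // Y grows downward on screen
    }
}
```

When two shapes share a vertex, the second call within the same frame returns immediately, which is the saving the caching is meant to provide.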

Use Vertex when:

- Constructing triangles, polygons, or textured shapes

- Your geometry needs texture UV coordinates

- You're performing CSG boolean operations (requires normal)

    // Create a textured triangle (texture coordinates use pixel units)
    // For a 256x256 texture: (0,0)=top-left, (256,256)=bottom-right
    Vertex v1 = new Vertex(new Point3D(0, 0, 100), new Point2D(0, 0));
    Vertex v2 = new Vertex(new Point3D(100, 0, 100), new Point2D(256, 0));
    Vertex v3 = new Vertex(new Point3D(50, 100, 100), new Point2D(128, 256));

    TexturedTriangle triangle = new TexturedTriangle(v1, v2, v3, texture);

3.3.3. When to Use Each

| Use Point3D | Use Vertex |

|---|---|

| Positioning shapes, cameras, lights | Building triangles and polygons |

| Vector math (distances, directions) | Texture-mapped geometry |

| Rotating or translating positions | CSG operations |

| Temporary calculations | Shapes that render through transform pipeline |

For simple shapes without textures, you can pass raw Point3D coordinates directly to constructors; the shape will internally wrap them in Vertex objects. The coordinate system above defines the meaning of all x, y, z values in both classes.

3.4. Edge

An edge is a straight line segment connecting two vertices. Edges form the wireframe skeleton of a 3D model — the structural framework visible when surfaces are not rendered. A triangle has 3 edges, a cube has 12 edges, and complex meshes have thousands.

In Sixth 3D, the Line class implements edges as renderable shapes. Each Line connects two Vertex endpoints and stores two properties: a width in world units (adjusted for perspective during rendering) and a color with alpha transparency. The rendering algorithm switches between two modes based on the projected screen width: thin lines below the threshold are drawn as single pixels with alpha-adjusted coloring, while thicker lines are rendered as filled rectangles with perspective-correct edge fading using four LineInterpolator scanline boundaries.

Wireframe shapes are composite objects built from multiple Line instances. For example, WireframeBox creates 12 Line objects — four edges parallel to each axis — using a LineAppearance factory to ensure consistent styling across all edges. The WireframeCube convenience subclass provides a center-point constructor. See the Shape Gallery demo for a visual comparison of wireframe (edges only) versus solid polygon (surfaces with lighting) rendering modes.

3.5. Face (Triangle)

A face is a flat surface enclosed by edges — the visible skin of a 3D object. While faces can theoretically have any number of sides, 3D engines standardize on triangles because three points always define a flat plane. A quad (4 vertices) or pentagon (5 vertices) might be non-planar depending on vertex positions, causing rendering artifacts. Triangles avoid this problem entirely.

3.5.1. SolidPolygon — Solid-Color Faces

SolidPolygon is the primary face type, supporting any number of vertices (3 or more). For triangles, it renders directly via scanline rasterization. For N-vertex polygons (quads, pentagons, etc.), the engine automatically triangulates using fan decomposition before rendering — a quad becomes 2 triangles, a pentagon becomes 3.
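Fan decomposition itself is simple enough to sketch in a few lines. The class and method names below are hypothetical and this is not the engine's code; it only shows how an N-vertex convex polygon yields N-2 triangles that all share vertex 0.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical sketch of fan triangulation for convex polygons. */
final class FanTriangulation {
    private FanTriangulation() {}

    /**
     * Returns one index triple per triangle for a convex polygon with n
     * vertices. Indices refer to positions in the polygon's vertex list.
     */
    static List<int[]> triangulate(int n) {
        if (n < 3) throw new IllegalArgumentException("need at least 3 vertices");
        List<int[]> triangles = new ArrayList<>(n - 2);
        for (int i = 1; i < n - 1; i++) {
            triangles.add(new int[] {0, i, i + 1}); // every triangle fans out from vertex 0
        }
        return triangles;
    }
}
```

A quad yields (0,1,2) and (0,2,3); a pentagon adds (0,3,4). The approach is only valid for convex polygons, since a fan from one vertex can leave the outline of a concave shape.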

Each SolidPolygon stores a single fill color with optional alpha transparency. When shading is enabled, the lighting manager computes the polygon's illumination once during the transform phase, then applies the shaded color during painting. Backface culling (see Winding Order & Backface Culling) can be enabled per-polygon, or applied recursively to an entire composite shape via AbstractCompositeShape.setBackfaceCulling(true) — this propagates the setting to all SolidPolygon and TexturedTriangle sub-shapes, including nested composites.

    // Create a red triangle
    SolidPolygon triangle = SolidPolygon.triangle(
        new Point3D(0, 0, 100),
        new Point3D(50, 0, 100),
        new Point3D(25, 50, 100),
        Color.RED
    );

    // Create a blue quad (internally triangulated)
    SolidPolygon quad = SolidPolygon.quad(
        new Point3D(-50, -50, 100),
        new Point3D(50, -50, 100),
        new Point3D(50, 50, 100),
        new Point3D(-50, 50, 100),
        Color.BLUE
    );

    // Enable lighting and culling for a closed mesh
    quad.setShadingEnabled(true);
    quad.setBackfaceCulling(true);

3.5.2. TexturedTriangle — UV-Mapped Faces

TexturedTriangle renders faces with image textures mapped via UV coordinates. Each of the three vertices stores a textureCoordinate (a Point2D with U and V values in pixel units matching the texture dimensions). For a 256×256 texture, coordinates range from (0,0) at the top-left corner to (256,256) at the bottom-right. During rasterization, the engine interpolates these UV coordinates across the triangle's surface, sampling the texture at each pixel. When mipmaps are used, the multiplicationFactor scales coordinates to match the selected mipmap resolution.

The texture system supports mipmaps — pre-scaled versions of the texture selected based on the triangle's screen size to reduce aliasing artifacts on distant surfaces. For large triangles that would show perspective distortion, the engine can tessellate the triangle into smaller pieces for more accurate rendering.
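To see why large triangles are the problematic ones, it helps to compare plain (affine) screen-space interpolation with the textbook perspective-correct formula. The sketch below is only a worked calculation with hypothetical names; the engine's own remedy, as described above, is tessellation, and nothing here is claimed to be how Sixth 3D samples textures.

```java
/** Hypothetical worked example comparing two ways to interpolate a texture coordinate. */
final class TextureInterpolation {
    private TextureInterpolation() {}

    /** Affine: interpolates u linearly in screen space, ignoring depth. */
    static double affine(double u0, double u1, double t) {
        return u0 + (u1 - u0) * t;
    }

    /** Perspective-correct: interpolates u/z and 1/z linearly, then divides. */
    static double perspective(double u0, double z0, double u1, double z1, double t) {
        double uOverZ = (1 - t) * (u0 / z0) + t * (u1 / z1);
        double oneOverZ = (1 - t) / z0 + t / z1;
        return uOverZ / oneOverZ;
    }
}
```

For an edge running from depth 100 (u = 0) to depth 400 (u = 256), the screen-space midpoint is u = 128 with affine interpolation but u = 51.2 when depth is accounted for, an error of almost 77 texels. If the edge only spans depths 100 to 110, the correct midpoint is about 121.9, so the affine result is off by roughly 6 texels. Splitting a large triangle into small ones keeps each piece in that second regime.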

    // Create a 256x256 texture
    Texture texture = new Texture(256, 256, 2); // width, height, maxUpscale

    // Create a textured triangle with UV coordinates in pixel units
    Vertex v1 = new Vertex(new Point3D(0, 0, 100), new Point2D(0, 0));       // top-left
    Vertex v2 = new Vertex(new Point3D(100, 0, 100), new Point2D(256, 0));   // top-right
    Vertex v3 = new Vertex(new Point3D(50, 100, 100), new Point2D(128, 256)); // bottom-center

    TexturedTriangle triangle = new TexturedTriangle(v1, v2, v3, texture);
    triangle.setBackfaceCulling(true);

Both SolidPolygon and TexturedTriangle extend AbstractCoordinateShape, which handles vertex transformation and depth sorting. See the Shape Gallery demo for a visual comparison of solid versus textured polygon rendering.

3.6. Normal Vector

A normal is a vector perpendicular to a surface. It tells the renderer which direction a face is pointing. Normals are critical for lighting — the angle between the light direction and the normal determines how bright a surface appears.

Use cases:

| Use case | API | Computation | Location |
|---|---|---|---|
| BSP/CSG operations | SolidPolygon.getPlane() → Plane.normal | Lazy-cached once | Plane |
| Per-frame shading | Plane.computeNormal() → cachedNormal field | Recomputed every frame | SolidPolygon |
| Lighting calculation | LightingManager.computeLighting() | Uses normal via dot(L,N) | LightingManager |

Implementation notes:

- Plane.computeNormal(): shared zero-allocation helper for computing normals from three points

- Plane: stores normals in Hesse normal form (normal + distance) for BSP spatial partitioning

- Vertex.normal: optional field for CSG polygon splitting (not used for rendering)
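As a rough illustration of what "normal from three points" and "Hesse normal form" mean, here is a self-contained sketch. `PlaneSketch` is a hypothetical class using plain arrays; it is not the engine's Plane, which is described above as zero-allocation, and the direction the normal points in depends on vertex order, which this sketch does not try to match to the engine's convention.

```java
/** Hypothetical sketch: plane through three points, stored as unit normal + distance. */
final class PlaneSketch {
    final double nx, ny, nz; // unit normal
    final double distance;   // Hesse form: dot(normal, p) == distance for points on the plane

    PlaneSketch(double[] a, double[] b, double[] c) {
        // Two edge vectors sharing vertex a
        double ux = b[0] - a[0], uy = b[1] - a[1], uz = b[2] - a[2];
        double vx = c[0] - a[0], vy = c[1] - a[1], vz = c[2] - a[2];
        // The cross product u x v is perpendicular to both edges
        double cx = uy * vz - uz * vy;
        double cy = uz * vx - ux * vz;
        double cz = ux * vy - uy * vx;
        double len = Math.sqrt(cx * cx + cy * cy + cz * cz);
        if (len == 0) throw new IllegalArgumentException("degenerate triangle");
        nx = cx / len; ny = cy / len; nz = cz / len;
        distance = nx * a[0] + ny * a[1] + nz * a[2];
    }

    /** Signed distance: positive on the normal's side, negative behind, 0 on the plane. */
    double signedDistance(double[] p) {
        return nx * p[0] + ny * p[1] + nz * p[2] - distance;
    }
}
```

The signed distance is what makes this representation convenient for BSP and CSG work: classifying a vertex as in front of, behind, or on a splitting plane is a single dot product.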

3.7. Shading & Lighting

Sixth 3D implements flat shading — one normal per polygon, computed from the first three vertices. Each polygon receives a single color based on its orientation relative to light sources.

To understand lighting and shading, read more about shading & lighting.
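A minimal sketch of flat shading for one polygon and one light, assuming the common Lambert cosine model with an ambient term. The names are hypothetical, and the engine's LightingManager may well add things this sketch omits (multiple lights, colored light, distance falloff).

```java
/** Hypothetical sketch of flat (per-polygon) Lambert shading. */
final class FlatShading {
    private FlatShading() {}

    /**
     * Brightness in [0, 1] for one polygon: ambient plus the diffuse term
     * max(0, dot(N, L)). Both vectors are assumed to be unit length.
     */
    static double brightness(double[] normal, double[] toLight, double ambient) {
        double dot = normal[0] * toLight[0] + normal[1] * toLight[1] + normal[2] * toLight[2];
        double diffuse = Math.max(0, dot);       // faces turned away receive no diffuse light
        return Math.min(1.0, ambient + diffuse); // clamp so channels never exceed 255
    }

    /** Scales one 0-255 color channel by the brightness. */
    static int shade(int channel, double brightness) {
        return (int) Math.round(channel * brightness);
    }
}
```

Because the normal is the same across the whole polygon, the brightness is computed once and applied to every pixel of that polygon, which is what gives flat shading its faceted look.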

3.8. Mesh

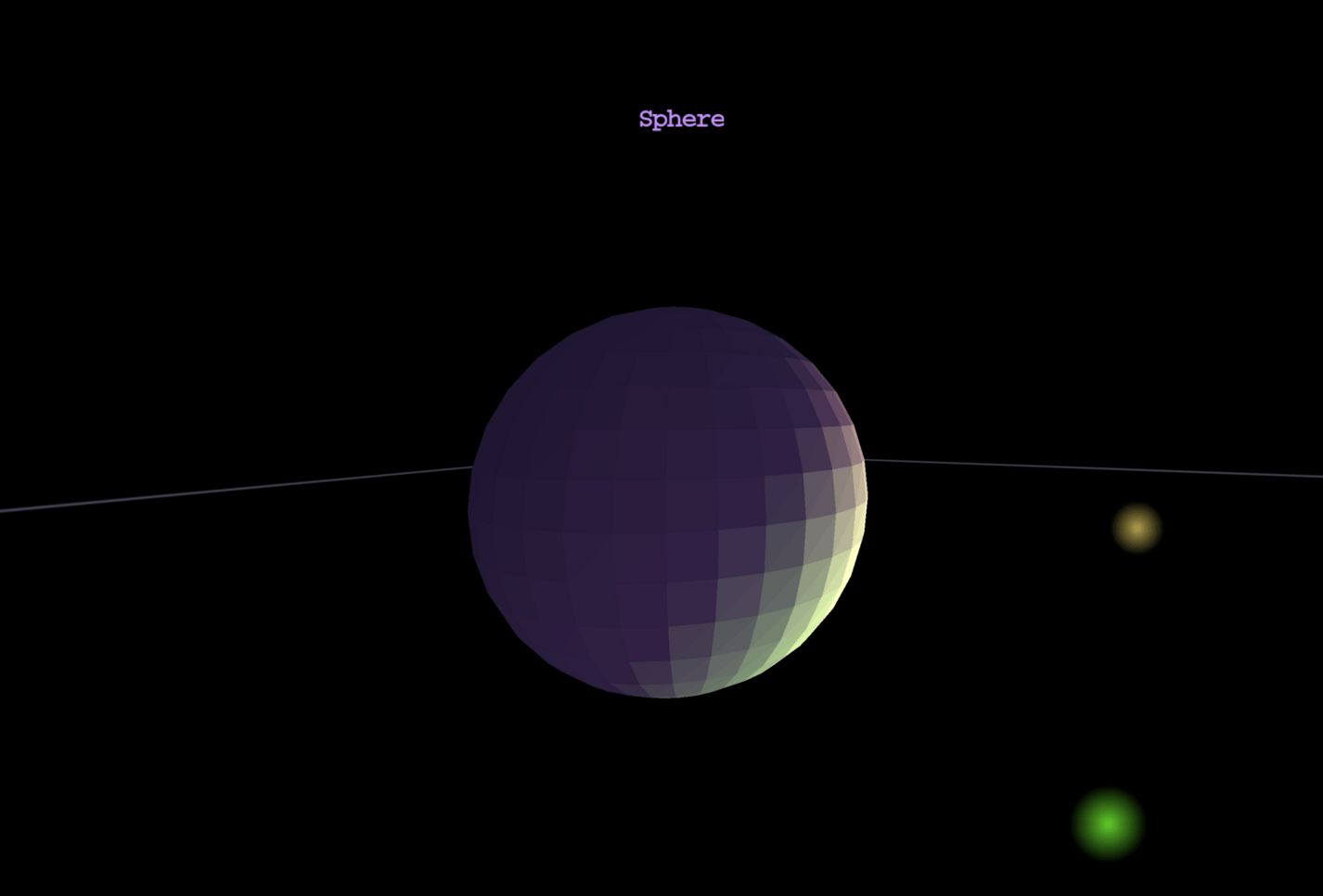

A mesh is a collection of vertices, edges, and faces that together define the shape of a 3D object. Even curved surfaces like spheres are approximated by many small triangles — more triangles means a smoother appearance. A cube has 8 vertices forming 12 triangular faces, while a smooth sphere requires hundreds or thousands of triangles depending on the desired quality.

In Sixth 3D, meshes are built through composition rather than monolithic vertex/index buffers. The AbstractCoordinateShape class is the foundation for primitive shapes — each instance stores its own List<Vertex> directly. This includes SolidPolygon (N-vertex convex polygons, not limited to triangles), TexturedTriangle (UV-mapped triangles), and Line (wireframe edges). The AbstractCompositeShape class groups multiple shapes into a single object with its own position, rotation, and transform — useful for complex models that move or rotate together.

Complex meshes are constructed procedurally by adding primitive shapes during initialization. For example, SolidPolygonSphere generates triangles using a latitude-longitude grid: with 16 segments, it creates approximately 900 SolidPolygon triangles by looping through rings and sectors, calling addShape() for each. The generic SolidPolygonMesh accepts any list of triangles, allowing custom geometry from procedural generation or external sources.

During rendering, several automatic optimizations occur. N-vertex polygons (quads, pentagons, etc.) are triangulated using fan triangulation in AbstractCompositeShape.retessellate(), converting an N-vertex polygon into N-2 triangles. Textured polygons undergo level-of-detail tessellation: triangles that appear large on screen (typically those close to the camera) are subdivided into smaller pieces for perspective-correct texture mapping. Composites perform view frustum culling to skip rendering when entirely off-screen. Sub-shapes can be organized into named groups via SubShape wrappers, allowing showGroup() and hideGroup() to toggle visibility of entire sections. Composite shapes also support CSG boolean operations; see the Constructive Solid Geometry documentation for union, subtract, and intersect operations.

The Shape Gallery demo showcases all primitive shapes available in Sixth 3D, rendered in both wireframe mode (edges only via WireframeBox and similar) and solid polygon mode (filled surfaces with dynamic lighting).

3.9. Perspective correct textures

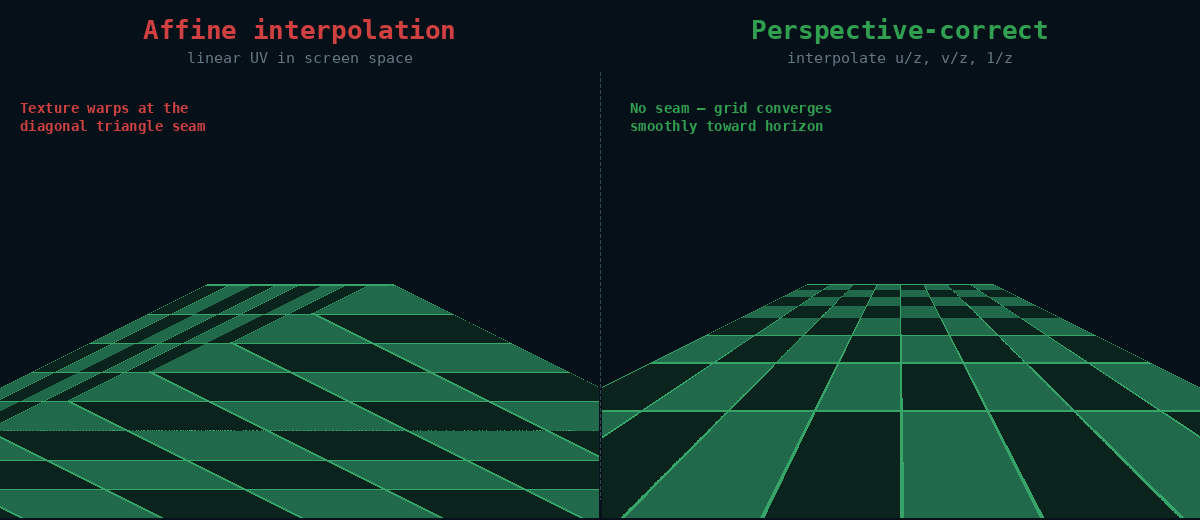

Sixth 3D tries to do perspective-correct texture rendering. Read more about perspective-correct texture implementation.

3.10. Frustum & View Frustum Culling

Sixth 3D implements view frustum culling.

To understand frustum culling and object-level visibility optimization, read more about frustum & view frustum culling.
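The core test behind frustum culling can be sketched generically: check an axis-aligned bounding box against each frustum plane and reject it as soon as it lies entirely behind one. Everything below is an assumption for illustration (hypothetical names, planes given as plain arrays); it is not the engine's implementation.

```java
/** Hypothetical sketch of an AABB-versus-frustum-planes rejection test. */
final class FrustumSketch {
    private FrustumSketch() {}

    /**
     * Each plane is {nx, ny, nz, d}; a point p is on the inside when
     * dot(n, p) + d >= 0. Returns true only if the box is fully outside.
     */
    static boolean isBoxOutside(double[][] planes, double[] min, double[] max) {
        for (double[] pl : planes) {
            // Pick the box corner farthest along the plane normal
            double px = pl[0] >= 0 ? max[0] : min[0];
            double py = pl[1] >= 0 ? max[1] : min[1];
            double pz = pl[2] >= 0 ? max[2] : min[2];
            // If even that corner is behind the plane, the whole box is outside
            if (pl[0] * px + pl[1] * py + pl[2] * pz + pl[3] < 0) return true;
        }
        return false; // inside or intersecting: must be rendered
    }
}
```

The test is conservative: a box that straddles a plane is kept, so nothing visible is ever dropped, at the price of occasionally rendering something that turns out to be off-screen.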

3.11. Constructive Solid Geometry

Sixth 3D supports boolean operations on shapes: you can union, subtract, or intersect them.

To understand CSG boolean operations, read more about Constructive Solid Geometry.

3.12. Winding Order & Backface Culling

The order in which a triangle's vertices are listed determines its winding order. In Sixth 3D, screen coordinates have Y-axis pointing down, which inverts the apparent winding direction compared to standard mathematical convention (Y-up). Counter-clockwise (CCW) in screen space means front-facing. Backface culling skips rendering triangles that face away from the camera — a major performance optimization.

- CCW winding (in screen space) → front face (visible)

- CW winding (in screen space) → back face (culled)

- When viewing a polygon from outside: define vertices in counter-clockwise order as seen from the camera

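- For a closed mesh, roughly half of the triangles face away from the camera at any moment, so culling can skip about 50% of triangle rendering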

- Implementation uses signed area: signedArea < 0 means front-facing (in Y-down screen coordinates, negative signed area corresponds to visually CCW winding)
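The signed-area test mentioned in the list above can be sketched with the standard shoelace formula. The class and method names are hypothetical; only the sign convention (negative means front-facing in Y-down screen space) is taken from the text.

```java
/** Hypothetical sketch of a signed-area winding test in screen space. */
final class Winding {
    private Winding() {}

    /** Twice the signed area of a 2D triangle (shoelace formula). */
    static double signedArea2(double x1, double y1, double x2, double y2,
                              double x3, double y3) {
        return (x2 - x1) * (y3 - y1) - (x3 - x1) * (y2 - y1);
    }

    /** Front-facing under the convention stated above: negative signed area. */
    static boolean isFrontFacing(double x1, double y1, double x2, double y2,
                                 double x3, double y3) {
        return signedArea2(x1, y1, x2, y2, x3, y3) < 0;
    }
}
```

For example, the screen-space triangle (0,0) → (0,10) → (10,0) runs down the left side and then up to the right, which looks counter-clockwise on a Y-down screen; its doubled signed area is -100, so it is front-facing. Listing the same points in the opposite order gives +100 and the triangle would be culled.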

In Sixth 3D, backface culling is optional and disabled by default. Enable it per-shape:

- SolidPolygon.setBackfaceCulling(true)

- TexturedTriangle.setBackfaceCulling(true)

- AbstractCompositeShape.setBackfaceCulling(true) (applies to all sub-shapes)

See the Winding Order demo for an interactive visualization.

3.13. Working with Colors

Sixth 3D uses its own Color class instead of java.awt.Color. This custom implementation is designed specifically for the engine's software rasterizer, where avoiding object allocation during rendering is critical for performance. When rendering thousands of polygons per frame, creating new Color instances for each one would generate excessive garbage and trigger frequent garbage collection pauses. Instead, the engine's Color class uses mutable fields that can be reused across frames.

The class stores RGBA components as public integer fields in the range 0–255. This format matches the engine's pixel buffer layout and avoids costly float-to-int conversions during rasterization. The r, g, b, and a fields are accessible directly, allowing lighting calculations and alpha blending to modify colors in-place without allocating new objects. For example, the SolidPolygon class maintains a reusable shadedColor field that gets updated during each frame's lighting calculation instead of creating a new Color instance per polygon.

Color provides several constructors for different input formats. The most common approach is using hex strings via Color.hex(String) or the String constructor, which support formats like "F80" (3-digit RGB), "FF8800" (6-digit RGB), "F808" (4-digit RGBA), and "FF8800CC" (8-digit RGBA). You can also create colors from integer RGBA components (0–255) using new Color(r, g, b, a), from floating-point components (0.0–1.0) via new Color(double r, double g, double b, double a), or from a packed RGB integer like 0xFF8800 using new Color(int rgb). The class also provides predefined constants for common colors: Color.RED, Color.GREEN, Color.BLUE, Color.YELLOW, Color.CYAN, Color.MAGENTA, Color.WHITE, Color.BLACK, and Color.TRANSPARENT.

The set(int r, int g, int b, int a) method modifies a Color in-place and returns this for method chaining, which is essential for performance during rendering. For example, the LightingManager calculates lighting contributions from all light sources and stores the final shaded color directly into a reusable Color instance via set(), avoiding any allocation. The toAwtColor() method converts a Sixth 3D Color to a java.awt.Color when needed for Java2D graphics operations, caching the result to avoid repeated conversion. The toInt() method packs the color into an ARGB integer suitable for the engine's pixel buffer, used during rasterization to write pixels directly.

    import eu.svjatoslav.sixth.e3d.renderer.raster.Color;
    import static eu.svjatoslav.sixth.e3d.renderer.raster.Color.hex;

    // Using predefined color constants
    Color red = Color.RED;
    Color transparent = Color.TRANSPARENT;

    // Create from hex string (recommended for clarity)
    Color orange = hex("FF8800");            // RGB, fully opaque
    Color semiTransparent = hex("FF880080"); // RGBA, 50% transparent

    // Create from integer components (0-255)
    Color custom = new Color(255, 128, 64, 200);

    // Create from packed RGB integer
    Color packed = new Color(0xFF8800);

    // Modify existing color in-place (no allocation)
    Color reusable = new Color();
    reusable.set(100, 200, 50, 255);

    // Convert to AWT color for Java2D operations
    java.awt.Color awtColor = custom.toAwtColor();

    // Use in lighting calculations (LightingManager modifies in-place)
    // See the Shading & Lighting documentation for details
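For readers curious what hex parsing and ARGB packing involve, here is a standalone sketch. `RgbaSketch` is a hypothetical class, not the engine's Color, and two details are assumptions: that single-digit components expand CSS-style ("F" becomes 0xFF) and that the packed layout is 0xAARRGGBB.

```java
/** Hypothetical sketch of a mutable RGBA color with hex parsing and ARGB packing. */
final class RgbaSketch {
    int r, g, b, a; // 0-255 each, mutable so one instance can be reused

    /** In-place update that returns this for chaining. */
    RgbaSketch set(int r, int g, int b, int a) {
        this.r = r; this.g = g; this.b = b; this.a = a;
        return this;
    }

    /** Packs the components as 0xAARRGGBB. */
    int toInt() {
        return (a << 24) | (r << 16) | (g << 8) | b;
    }

    /** Parses "RGB", "RGBA", "RRGGBB" or "RRGGBBAA"; alpha defaults to opaque. */
    static RgbaSketch hex(String s) {
        int n = s.length();
        if (n != 3 && n != 4 && n != 6 && n != 8)
            throw new IllegalArgumentException("unsupported hex color: " + s);
        int digits = n <= 4 ? 1 : 2;         // hex digits per component
        int[] c = {0, 0, 0, 255};
        for (int i = 0; i * digits < n; i++) {
            int v = Integer.parseInt(s.substring(i * digits, (i + 1) * digits), 16);
            c[i] = digits == 1 ? v * 17 : v; // assumed: "F" expands to 0xFF, "8" to 0x88
        }
        return new RgbaSketch().set(c[0], c[1], c[2], c[3]);
    }
}
```

Under these assumptions "F80" and "FF8800" describe the same opaque orange, and both pack to 0xFFFF8800.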

The alpha component controls transparency during rendering. A value of 0 makes the color fully transparent, while 255 makes it fully opaque. The rasterizer implements alpha blending during the paint phase: when drawing a semi-transparent pixel, the engine blends the source color with the existing background pixel proportionally based on the alpha value. The TextureBitmap.drawPixel() method handles this blending, multiplying source colors by alpha and background colors by (255 - alpha), then combining them. You can test whether a color is fully transparent using isTransparent(), which returns true when alpha equals zero.
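The blending rule described above can be written out per channel. This is a sketch under assumptions, with hypothetical names: it divides by 255 to normalize, whereas the engine's drawPixel() may normalize differently (for example with a bit shift).

```java
/** Hypothetical sketch of integer alpha blending over an opaque background. */
final class AlphaBlend {
    private AlphaBlend() {}

    /** Blends one 0-255 channel: source weighted by alpha, background by (255 - alpha). */
    static int blendChannel(int src, int dst, int alpha) {
        return (src * alpha + dst * (255 - alpha)) / 255;
    }

    /** Blends a source color over an opaque 0xRRGGBB background pixel. */
    static int blend(int srcR, int srcG, int srcB, int alpha, int dstRgb) {
        int r = blendChannel(srcR, (dstRgb >> 16) & 0xFF, alpha);
        int g = blendChannel(srcG, (dstRgb >> 8) & 0xFF, alpha);
        int b = blendChannel(srcB, dstRgb & 0xFF, alpha);
        return (r << 16) | (g << 8) | b;
    }
}
```

With alpha 0 the background survives unchanged, with alpha 255 the source replaces it, and with alpha 128 a white source over black gives a mid gray of 128 per channel.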

For lighting calculations, the LightSource class uses Color to represent the color and intensity of emitted light. Multiple light sources contribute to the final shaded color of each polygon, as described in the Shading & Lighting documentation. The ambient light provides base illumination that affects all surfaces equally, regardless of orientation. Colors are also used for wireframe rendering via the Line class, where the color field determines the line's appearance.

4. Developer tools

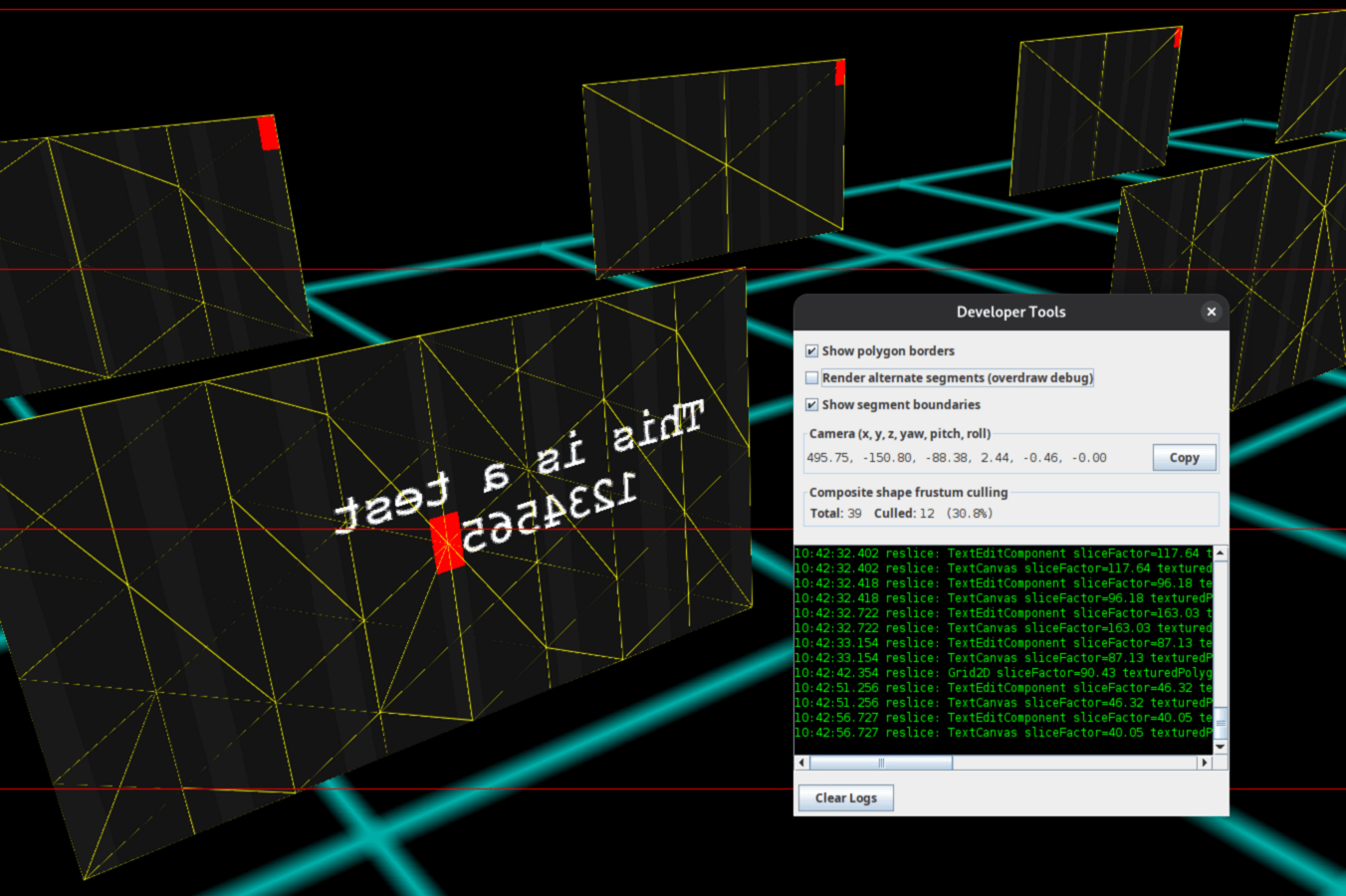

Press F12 anywhere in the application to open the Developer Tools panel.

This debugging interface helps you understand what the engine is doing internally and diagnose rendering issues.

4.1. Show polygon borders

When enabled, each TexturedTriangle draws yellow outlines around its three edges after rendering its texture content. This overlays the geometric structure of tessellated textured surfaces onto the final image.

The feature exists primarily to visualize adaptive tessellation — the recursive subdivision of large triangles into smaller pieces for perspective-correct texture mapping. When you enable this option on a scene with tessellation active, you can see how the TexturedPolygonTessellator has split each original triangle. Dense yellow lines indicate high tessellation (many small triangles), while sparse lines show low tessellation or triangles that were small enough to render without subdivision.

Use this visualization when investigating:

- Tessellation behavior: verify that near surfaces are subdivided more densely

- Texture distortion: compare the tessellation density against visible warping in the texture

4.2. Render alternate segments (overdraw debug)

Renders only even-numbered horizontal segments (0, 2, 4, 6) while leaving odd segments (1, 3, 5, 7) black.

The engine divides the screen into 8 horizontal segments for parallel multi-threaded rendering. This toggle helps detect overdraw (threads writing outside their allocated segment).

If you see rendering artifacts in the black segments, it indicates that threads are writing pixels outside their assigned area — a clear sign of a bug.
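One way to picture the segment layout and the overdraw check is the sketch below. The segment count of 8 comes from the text; everything else (the names, and splitting rows evenly with integer arithmetic) is an assumption and may not match how the engine assigns rows.

```java
/** Hypothetical sketch of dividing screen rows into 8 horizontal segments. */
final class Segments {
    static final int COUNT = 8; // segment count stated above

    private Segments() {}

    /** First row (inclusive) of segment i for a screen of the given height. */
    static int startRow(int i, int height) {
        return (int) ((long) i * height / COUNT);
    }

    /** End row (exclusive) of segment i; equals the next segment's start. */
    static int endRow(int i, int height) {
        return startRow(i + 1, height);
    }

    /** True when the thread rendering segment i is allowed to write row y. */
    static boolean mayWrite(int i, int y, int height) {
        return y >= startRow(i, height) && y < endRow(i, height);
    }

    /** In the alternate-segments debug mode only even segments are drawn. */
    static boolean drawnInDebugMode(int i) {
        return i % 2 == 0;
    }
}
```

On a 600-row screen each segment covers 75 rows, so segment 0 owns rows 0 to 74. A pixel that shows up in row 75 while only even segments are drawn must have been written by a thread that left its own range.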

4.3. Show segment boundaries

Draws visible lines between horizontal rendering segments to show where the screen is divided for parallel multi-threaded rendering.

The engine divides the screen into 8 horizontal segments for parallel rendering. This toggle draws boundary lines between segments, making it easy to see exactly where each thread's rendered area begins and ends.

Useful for:

- Verifying correct segment division

- Debugging segment-related rendering issues

- Understanding the parallel rendering architecture visually

4.4. Camera position

Displays the current camera coordinates and orientation in real-time:

| Parameter | Description |

|---|---|

| x, y, z | Camera position in 3D world space |

| yaw | Rotation around the Y axis (left/right) |

| pitch | Rotation around the X axis (up/down) |

| roll | Rotation around the Z axis (tilt) |

The Copy button copies the full camera position string to the clipboard in a format ready to paste into bug reports or configuration files.

Use this for:

- Reporting exact camera positions when filing bugs

- Saving interesting viewpoints for later reference

- Understanding camera movement during navigation

- Sharing specific views with other developers

Example copied format:

500.00, -300.00, -800.00, 0.60, -0.50, -0.00
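If you want to read such a string back in your own tooling, a small parser is enough. The sketch below is hypothetical and assumes the six values appear in the order of the table above (x, y, z, yaw, pitch, roll); the source does not state the field order explicitly, so verify it against the engine before relying on it.

```java
/** Hypothetical parser for the copied camera string; field order is an assumption. */
final class CameraPose {
    final double x, y, z, yaw, pitch, roll;

    private CameraPose(double[] v) {
        x = v[0]; y = v[1]; z = v[2];
        yaw = v[3]; pitch = v[4]; roll = v[5];
    }

    /** Parses six comma-separated decimal values, tolerating spaces around commas. */
    static CameraPose parse(String text) {
        String[] parts = text.trim().split("\\s*,\\s*");
        if (parts.length != 6)
            throw new IllegalArgumentException("expected 6 comma-separated values: " + text);
        double[] v = new double[6];
        for (int i = 0; i < 6; i++) {
            v[i] = Double.parseDouble(parts[i]); // throws NumberFormatException on bad input
        }
        return new CameraPose(v);
    }
}
```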

4.5. Frustum culling statistics

Shows real-time statistics about composite shape frustum culling efficiency:

| Statistic | Description |

|---|---|

| Total | Number of composite shapes tested against the frustum |

| Culled | Number of composites rejected (outside view frustum) |

| Culled % | Percentage of composites that were culled (0-100%) |

What is frustum culling?

Frustum culling is an optimization that skips rendering objects outside the camera's view. Before rendering each composite shape, the engine tests its bounding box against the view frustum. If the bounding box is completely outside the visible area, the entire composite (and all its children) is skipped.

How to interpret the numbers:

- High cull % (60-90%): Excellent — most objects are being correctly culled

- Medium cull % (20-60%): Moderate — some optimization benefit

- Low cull % (0-20%): Limited benefit — either all objects are visible, or scene needs restructuring

Example:

Total: 473 Culled: 425 (89.9%)

This means 473 composite shapes were tested, 425 were outside the view and skipped entirely, and only 48 composites (with all their children) actually needed to be rendered. This is excellent culling efficiency.
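The arithmetic behind that line is straightforward; the sketch below reproduces it with hypothetical names, purely to show how the percentage is derived from the two counters.

```java
import java.util.Locale;

/** Hypothetical sketch of how the culling statistic can be derived and formatted. */
final class CullStats {
    private CullStats() {}

    /** Percentage of tested composites that were culled; 0 when nothing was tested. */
    static double culledPercent(int total, int culled) {
        return total == 0 ? 0.0 : 100.0 * culled / total;
    }

    /** Formats the counters in the style of the example above. */
    static String format(int total, int culled) {
        // Locale.ROOT keeps the decimal point stable regardless of system locale
        return String.format(Locale.ROOT, "Total: %d Culled: %d (%.1f%%)",
                total, culled, culledPercent(total, culled));
    }
}
```

For 473 tested and 425 culled composites, 425 / 473 ≈ 0.8985, which rounds to 89.9%, and 473 - 425 = 48 composites remain to be rendered.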

The statistics update every 200ms while the panel is open. Note that the root composite is never frustum-tested (it's always rendered), so the "Total" count excludes it.

4.6. Live log viewer

The scrollable text area shows captured debug output in real-time:

- Green text on black background for readability

- Auto-scrolls to show latest entries

- Updates every 500ms while panel is open

- Captures logs even when panel is closed (replays when reopened)

Use the Clear Logs button to reset the log buffer for fresh diagnostic captures.

5. Source code

This program is free software, released under the Creative Commons Zero (CC0) license.

Program author:

- Svjatoslav Agejenko

- Homepage: https://svjatoslav.eu

- Email: svjatoslav@svjatoslav.eu

- See also: Other software projects hosted at svjatoslav.eu

Getting the source code:

- Download latest source code snapshot in TAR GZ format

- Browse Git repository online

Clone Git repository using command:

git clone https://www3.svjatoslav.eu/git/sixth-3d.git